Introduction

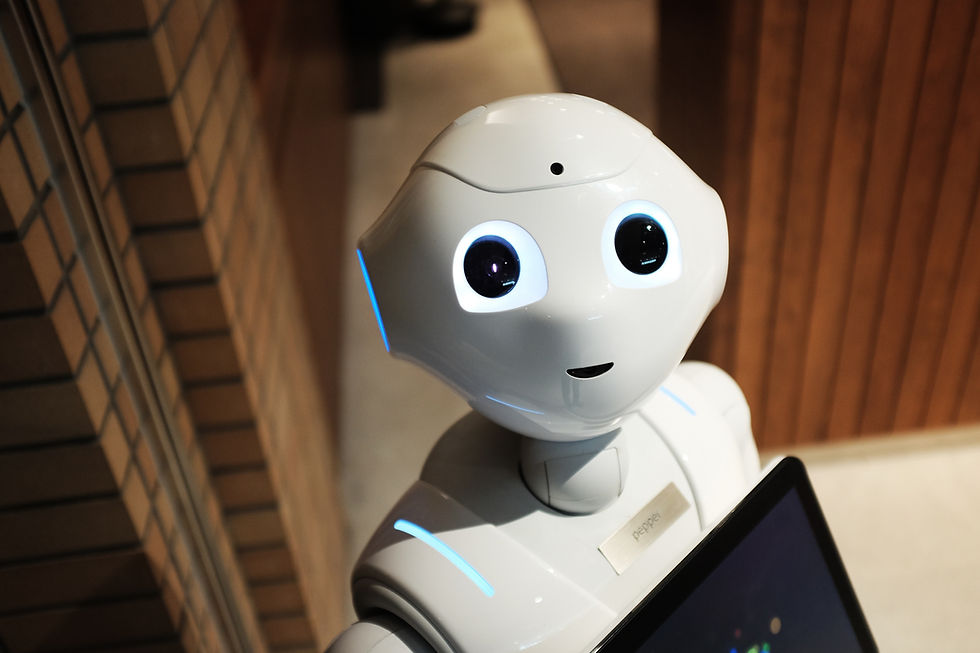

Artificial Intelligence (AI) is being integrated into our daily routines at an unprecedented rate. It is no longer confined to tech-savvy individuals or specialized industries; AI is reaching into nearly every aspect of our lives and changing the ways we work, communicate, and entertain ourselves. It's an exciting time, with advancements happening at a rapid pace. But alongside this wave of innovation come serious ethical concerns that need to be addressed.

AI, with its tremendous capability to process and analyze large volumes of data, has the potential to drastically improve efficiency across multiple sectors. However, this very capability presents a challenge to individual privacy rights. In an era where data is the new currency, how do we protect individual information from being exploited?

Another ethical issue is the potential for bias in AI systems. AI algorithms are only as unbiased as the data they are trained on. If the training data contains biases, the AI will likely reproduce or even amplify these biases, leading to unfair outcomes.

Furthermore, as AI systems become more sophisticated, there's a looming concern about job displacement. While AI can certainly automate mundane tasks and free up human time for more creative endeavors, the transition might not be smooth for all sectors or for all workers.

In this blog post, we will delve into these three key ethical implications of AI: privacy concerns, bias in AI, and job displacement. We aim to raise awareness and provoke thoughtful discussion on these important issues.

Privacy Concerns

One of the most pressing concerns to emerge with the advancement of artificial intelligence (AI) is the potential violation of privacy. To function effectively, AI systems often rely on processing large amounts of data, a significant portion of which may be personal or sensitive in nature.

For instance, AI voice assistants, a technology many people interact with daily, listen continuously for our commands in order to perform their tasks. This constant monitoring opens up a real possibility of unwanted surveillance, a potentially alarming breach of privacy that can occur without the user's knowledge or consent.

This situation raises several questions. Foremost among them is consent: how can we ensure that users are fully informed about the extent and implications of the data collection that AI entails, and that they willingly agree to it? The proper use of data is equally important: how can we make sure that collected data is used responsibly, in line with the user's expectations and without infringing on their privacy?

Additionally, the risk of data breaches or misuse is a critical concern. As AI systems become more integrated into our lives, the potential for data to fall into the wrong hands or to be misused increases. How can we protect against these risks and ensure that the data collected remains safe and secure? All these questions underscore the need for continued attention and thoughtful discussion on the ethical implications of AI, especially in terms of privacy.

Bias in AI

Another significant ethical concern in the era of artificial intelligence is the potential for these systems to perpetuate or even amplify the biases already present in human society. The root of the issue is that AI systems are trained on data generated by human activity, which inevitably carries our conscious and unconscious biases.

As these systems learn from the data they are fed, they can inadvertently absorb and replicate these human biases. This is a particularly alarming prospect because it can lead to discriminatory outcomes across critical sectors of society. For instance, in the hiring process, a biased AI could unfairly disqualify candidates based on race, gender, or age. Similarly, in law enforcement, an AI system could disproportionately target certain ethnic or social groups, leading to unjust treatment. In the financial sector, credit scoring algorithms could unfairly disadvantage certain demographics, further widening economic disparities.
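To make the mechanism concrete, here is a deliberately tiny sketch of how a system that learns from past decisions can carry their skew forward. Everything in it is an assumption made up for illustration: the `HISTORY` records, the group labels "A" and "B", and the `fit_hire_rates` and `predict` helpers. Real systems are far more complex and often pick up bias through proxy variables rather than an explicit group field, but the pattern is the same.

```python
from collections import defaultdict

# Hypothetical historical hiring records: (group, was_hired).
# The data is invented for illustration and deliberately skewed.
HISTORY = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]


def fit_hire_rates(records):
    """Learn the historical hire rate for each group."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for group, was_hired in records:
        total[group] += 1
        hired[group] += int(was_hired)
    return {group: hired[group] / total[group] for group in total}


def predict(rates, group, threshold=0.5):
    """Recommend a candidate only if their group's past hire rate meets the threshold."""
    return rates[group] >= threshold


rates = fit_hire_rates(HISTORY)
print(rates)                                      # learned rates per group
print(predict(rates, "A"), predict(rates, "B"))   # recommendations per group
```

Nothing in this toy model is told to discriminate. It simply treats the past as the pattern to follow, so a group that was hired less often before is recommended less often now.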

Given these potential ramifications, it becomes absolutely crucial for us to ensure a high level of transparency in the way AI algorithms function. This includes understanding the decision-making process of AI, the variables it considers, and how it weighs different factors. Furthermore, we must strive to create, use, and promote representative and unbiased data sets for training AI systems. These steps will not only help us mitigate the risks associated with AI but also enable us to harness its potential in a fair and equitable manner.

Job Displacement

Artificial Intelligence, with its remarkable ability to automate tasks that were once considered the exclusive domain of humans, has brought concerns about job displacement to the forefront. As AI continues to be integrated across sectors, its impact on the workforce demands discussion.

While AI can substantially increase efficiency and productivity, it also poses the risk of job losses across an array of sectors. This isn't limited to sectors like manufacturing and customer service, which are traditionally associated with automation. Even sectors such as healthcare, which are generally considered to require a human touch, now face the prospect of automation.

The implications of AI and automation for job displacement raise profound ethical questions. Central among these is how to ensure the equitable distribution of the benefits of AI. As we move towards a future increasingly reliant on AI, it is crucial to consider how best to support those whose livelihoods are most vulnerable to automation.

In a world rapidly transforming under the influence of AI, these questions demand our attention. They call for comprehensive strategies that not only embrace the advantages of AI but also provide safety nets for those affected by its drawbacks.

Conclusion

Artificial Intelligence (AI) raises significant ethical concerns, including privacy, potential bias, and job displacement. AI's ability to process large volumes of data raises issues about individual privacy rights and the risk of data breaches. AI systems can also perpetuate human biases if trained on biased data, leading to discriminatory outcomes. Furthermore, the automation capabilities of AI pose the risk of job losses across various sectors. These ethical implications demand continued attention and thoughtful discussion.

Future Directions with Ethics and AI

In order to address these ethical implications, we need to steer the development of AI in a direction that is ethically conscious and socially responsible. As AI continues to evolve, it is essential that ethical considerations keep pace with technological advancements.

One way to ensure this is through the establishment of robust regulatory frameworks that guide the use and development of AI. These should be designed to protect individual privacy rights, prevent data breaches, and ensure transparency in AI decision-making processes. This could involve guidelines on data usage, limitations on certain AI applications, and requirements for AI transparency and explainability.

In addition, efforts should be made to mitigate bias in AI. This could involve the use of more representative and unbiased training data, as well as the development of AI systems that are designed to recognize and correct for biases. Moreover, regular audits of AI systems could be conducted to detect and rectify any biased outcomes.
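As a rough idea of what one step of such an audit might look like, the sketch below compares how often each group receives a positive decision and flags any group that falls well behind the best-treated one. The `selection_rates` and `audit` functions, the sample `decisions`, and the group labels are all hypothetical. The 0.8 default echoes the "four-fifths" rule of thumb from US employment guidelines, and is used here only as an assumed starting point; a real audit would look at many more measures than a single ratio.

```python
def selection_rates(decisions):
    """Return the share of positive decisions per group.

    decisions: iterable of (group, selected) pairs.
    """
    counts = {}
    for group, selected in decisions:
        total, positive = counts.get(group, (0, 0))
        counts[group] = (total + 1, positive + int(selected))
    return {g: positive / total for g, (total, positive) in counts.items()}


def audit(decisions, min_ratio=0.8):
    """Flag groups whose selection rate is below min_ratio of the highest rate.

    Returns a dict mapping each flagged group to its ratio against the top group.
    """
    rates = selection_rates(decisions)
    best = max(rates.values())
    if best == 0:
        return {}  # nobody was selected, so there is no disparity to measure
    return {g: r / best for g, r in rates.items() if r / best < min_ratio}


# Hypothetical decisions produced by some AI system (invented for illustration):
# group X is selected 5 times out of 10, group Y only 3 times out of 10.
decisions = (
    [("X", True)] * 5 + [("X", False)] * 5
    + [("Y", True)] * 3 + [("Y", False)] * 7
)
flagged = audit(decisions)
print(flagged)
```

A flagged group is not proof of unfairness on its own, but it is a signal that the system's outcomes deserve a closer look.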

Regarding job displacement, it is crucial that we prepare for an AI-integrated future by investing in education and re-skilling initiatives. These should aim to equip individuals with the skills needed to navigate and succeed in an increasingly automated workforce. Additionally, social safety nets could be strengthened to support those affected by job displacement due to AI.

In conclusion, while AI presents a multitude of ethical challenges, it also provides us with an opportunity to shape a future that is ethical, equitable, and beneficial for all. It calls for continuous dialogue, thoughtful action, and collaborative efforts from all stakeholders - policymakers, technologists, and society at large.
